So, I started thinking: With open source projects being online by default, and with everything else moving online and virtual, what should creators of open source technologies measure as we continue in this COVID and (hopefully soon) post-COVID world?

There are plenty of metrics you can track—stars, forks, pull requests (PRs), merge requests (MRs), contributor counts, etc.—but more data doesn't necessarily mean clearer insights. I've previously shared my skepticism about the value of these surface-level metrics, especially when assessing an open source project's health and sustainability.

In this article, I propose two second-order metrics to track, measure, and continually optimize to build a strong, self-sustaining open source community:

- Breakdowns of PR or MR reviewers

- Leaderboards of different community interactions

Why these metrics?

The long-term goal of any open source community-building effort is to reach a tipping point where the project can live beyond the initial creators' or maintainers' day-to-day involvement. It's an especially important goal if you are also building a commercial open source software (COSS) company around that open source technology, whether it's bootstrapped or venture capital-backed. Your company will eventually have to divert resources and people power to build commercial services or paid features.

It's a lofty goal that few projects achieve. Meanwhile, maintainer burnout is a real problem. The latest and most high-profile example is Redis creator Salvatore Sanfilippo, who shared his struggles as an open source maintainer last year and bowed out as CEO of Redis Labs earlier this year. Maintainers of various projects, big or small, struggle through similar challenges every day.

Focusing on the two metrics above, especially early in your open source journey, can increase your odds of building something sustainable because they illuminate two important elements that drive sustainability: ownership and incentive.

Breaking down PR/MR reviewers = Ownership

Tracking who in your community is actively reviewing contributions is a good indicator of ownership. In the beginning, you, the creator, do the bulk of reviewing, but this will be unsustainable over time. Being intentional about building your community so that more people have the contextual knowledge, confidence, and welcoming attitude to review incoming PRs/MRs (which should increase in frequency as your project gains traction) is crucial to long-term sustainability.
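As a rough illustration of what a reviewer breakdown could look like, here is a minimal Python sketch. It assumes you have already exported review records as `(PR number, reviewer handle)` pairs. The function name `reviewer_breakdown`, the handles, and the sample data are all hypothetical and not part of any tool mentioned in this article.

```python
from collections import Counter


def reviewer_breakdown(reviews, creators=()):
    """Return (per-reviewer shares sorted by count, combined share of the creators).

    `reviews` is an iterable of (pr_number, reviewer_handle) pairs.
    `creators` lists the handles of the project's original creators.
    """
    counts = Counter(reviewer for _pr, reviewer in reviews)
    total = sum(counts.values())
    if total == 0:
        return [], 0.0
    shares = [(name, n / total) for name, n in counts.most_common()]
    creator_share = sum(counts[c] for c in creators) / total
    return shares, creator_share


# Hypothetical sample data: (PR number, reviewer handle)
sample_reviews = [
    (101, "creator"), (102, "creator"), (103, "alice"),
    (104, "alice"), (105, "bob"), (106, "carol"),
    (107, "alice"), (108, "bob"),
]

shares, creator_share = reviewer_breakdown(sample_reviews, creators=("creator",))
for name, share in shares:
    print(f"{name:10s} {share:.0%}")
print(f"creator share: {creator_share:.0%}")
```

A creator share that shrinks over time, with the remaining reviews spread across more handles, is the pattern you would hope to see as ownership broadens.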

There's a customer service-like element to reviewing contributions, which can deteriorate if not enough people feel ownership to do it. And one of the worst signals you can give to an otherwise enthusiastic newcomer is a PR or MR left unattended for two or more weeks.
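One simple way to catch unattended contributions is to flag open PRs/MRs that have waited two weeks or more without a first review. The sketch below assumes each PR is a dictionary with `opened_at` and `first_review_at` fields. Those field names, the `unattended_prs` helper, and the sample data are hypothetical, chosen only for illustration.

```python
from datetime import datetime, timedelta


def unattended_prs(open_prs, now, max_wait=timedelta(days=14)):
    """Return open PRs with no review after at least `max_wait`, oldest first."""
    stale = [
        pr for pr in open_prs
        if pr["first_review_at"] is None and now - pr["opened_at"] >= max_wait
    ]
    return sorted(stale, key=lambda pr: pr["opened_at"])


# Hypothetical sample data
sample_prs = [
    {"number": 201, "opened_at": datetime(2020, 4, 1), "first_review_at": None},
    {"number": 202, "opened_at": datetime(2020, 5, 10), "first_review_at": None},
    {"number": 203, "opened_at": datetime(2020, 4, 5),
     "first_review_at": datetime(2020, 4, 6)},
    {"number": 204, "opened_at": datetime(2020, 3, 20), "first_review_at": None},
]

now = datetime(2020, 5, 15)
for pr in unattended_prs(sample_prs, now):
    waited = (now - pr["opened_at"]).days
    print(f"PR #{pr['number']} has waited {waited} days for a first review")
```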

Leaderboards of community interactions = Incentive

Tracking a few community-facing interactions and possibly gamifying them within your community with rewards can help drive the right incentives and behaviors among community members with all levels of experience. Some of the interactions you can track are the number of PRs/MRs filed, comments, reactions, and reviews (which have some overlap with reactions). These interactions may have different values and quality, but the bigger goal here is to understand who's doing what, who's good at doing what, and intentionally foster more behaviors based on people's strengths and interests.

Perhaps some people are great at providing helpful comments but don't have enough context (yet) to review a PR/MR. It would be good to identify who they are and provide them with more info so that they can become a reviewer one day. Perhaps some people are super-engaged with monitoring the project, as shown by their frequent reactions, but don't feel comfortable chiming in with comments and suggestions. It would be good to know who they are and help them gain more context into the project's inner workings, so they can add more value to a community they clearly care about.
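A leaderboard of this kind can be approximated with a few lines of Python. The sketch below assumes interactions have been collected as `(handle, kind)` pairs, where `kind` is one of the four interaction types discussed above. The `build_leaderboard` function, the `KINDS` labels, and the sample events are hypothetical.

```python
from collections import Counter, defaultdict

# Interaction types tracked on the leaderboard (labels are illustrative)
KINDS = ("pr", "comment", "reaction", "review")


def build_leaderboard(events, top_n=10):
    """Rank handles by total interactions, keeping a per-type breakdown."""
    per_user = defaultdict(Counter)
    for handle, kind in events:
        if kind in KINDS:
            per_user[handle][kind] += 1
    # Sort by total interactions (descending), then by handle for stable ties
    ranked = sorted(
        per_user.items(),
        key=lambda item: (-sum(item[1].values()), item[0]),
    )
    return [
        (handle, {kind: counts[kind] for kind in KINDS})
        for handle, counts in ranked[:top_n]
    ]


# Hypothetical sample data: (GitHub handle, interaction type)
sample_events = [
    ("alice", "review"), ("alice", "review"), ("alice", "comment"),
    ("bob", "pr"), ("bob", "comment"), ("bob", "comment"), ("bob", "comment"),
    ("carol", "reaction"), ("carol", "reaction"),
    ("carol", "reaction"), ("carol", "reaction"),
    ("dave", "pr"),
]

for handle, breakdown in build_leaderboard(sample_events):
    print(handle, breakdown)
```

In this made-up data, a row like carol's (many reactions, no comments) is the kind of pattern that could prompt you to reach out and share more context, while bob's frequent comments might mark him as a future reviewer.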

How to use the metrics

So how can you track and read into these metrics? I'll illustrate with an example comparing two open source projects: Kong, an API gateway, and Apache Pulsar, a pub-sub messaging system.

In May 2020, I used Apache Superset, an open source data visualization tool, along with a tutorial written by Max Beauchemin, one of the project's creators, to crawl and visualize some data from Kong, Apache Pulsar, and a few other projects. Using the tutorial, I crawled two years' worth of data (from May 2018 to May 2020) to see changes over time. (Note: The tutorial is an example of Superset's own data with some hard-coded linkage to its history inside Airbnb and Lyft, so to use it, you will need to do some customization of the dashboard JSON to make it work more generally.)

Two caveats: First, the data was last gathered in May 2020, so it will surely look different today. Second, the projects were open sourced at different times, and this comparison is not a judgment on the current state or potential of either project. (Kong's first commit was in November 2014, and Pulsar's was in September 2016. Of course, both were worked on in private before being open sourced.) Some of the differences you see may simply be due to the passage of time and community effort paying off at different times; community building is a long, persistent slog.

PR reviewer metrics

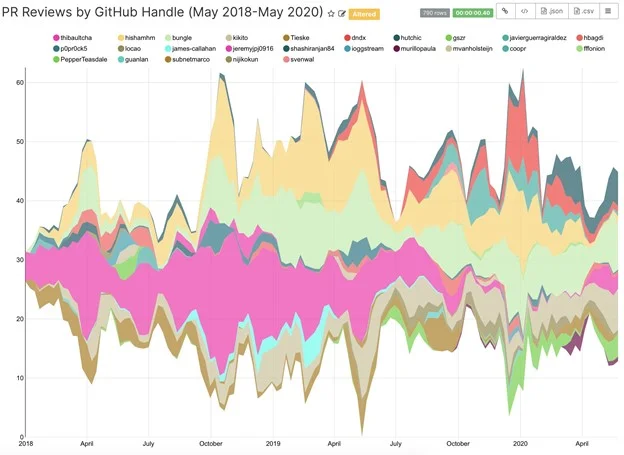

The following two charts show Kong and Pulsar's PR reviews by GitHub handle:

Image by Kong PR reviews by GitHub handle (COSS Media ©2020)

Image by Kong PR reviews by GitHub handle (COSS Media ©2020)

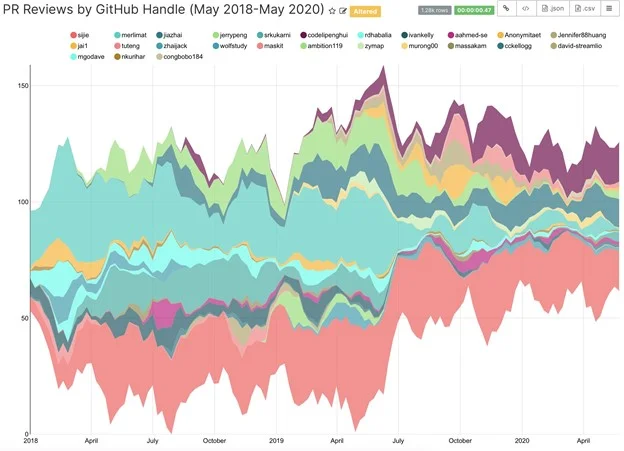

Pulsar's PR reviews by GitHub handle (COSS Media ©2020)

Pulsar's PR reviews by GitHub handle (COSS Media ©2020)

Based on this data, I observe that Kong has a better balance of people reviewing PRs than Pulsar has. That balance was achieved over time as the Kong project grew in maturity. The most important thing to note is that neither of Kong's creators, Aghi or Marco, is anywhere close to being the top reviewer. That's a good thing! They certainly were during the early days, but their involvement became less visible as the project matured, as it should.

As a younger project, Pulsar isn't at the same level yet but is on its way to achieving that balance. Sijie, Jia, and Penghui are doing the bulk of the reviewing for now; all three are project management committee members and lead StreamNative, Pulsar's COSS company. Other major players, including Splunk (especially after it acquired Streamlio), also contribute to the project, which is a good leading indicator of eventually achieving balance.

One factor I'm intentionally glossing over is the difference in the governance process of an Apache Software Foundation project and a non-ASF project, which would impact the speed and procedure for a contributor to become a reviewer or maintainer. In that regard, this comparison is not 100% apples-to-apples.

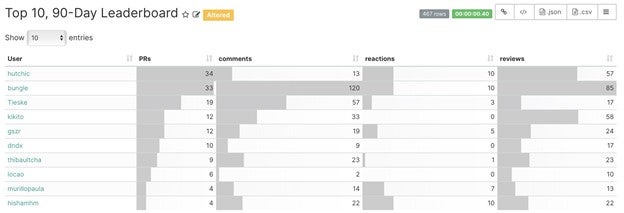

Following are two leaderboard charts showing Kong and Pulsar's community interactions by GitHub handle in the 90 days since the most recent data gathered (roughly from March to May 2020):

Kong's 90-day leaderboard chart (COSS Media ©2020)

Kong's 90-day leaderboard chart (COSS Media ©2020)

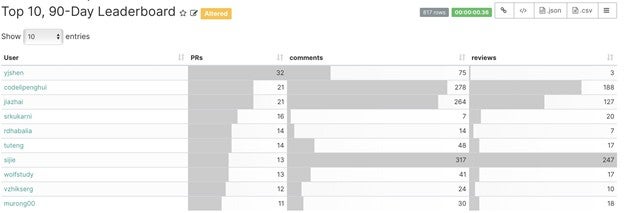

Pulsar's 90-day leaderboard chart (COSS Media ©2020)

Pulsar's 90-day leaderboard chart (COSS Media ©2020)

These leaderboards reflect the same "level of balance" shown in the PR reviewer charts, which makes sense. The more interesting takeaway is who is doing what kind of interaction, how much interacting is happening, and who seems to be doing it all (Sijie!).

As far as building the right incentive structure, this leaderboard-type view can help you get a sense of where interactions are coming from and what sort of reward or motivational system you want to design to accelerate positive behaviors.

This data is also useful for internal management and external community building. As close watchers can tell, many of the leaders on these charts are employees of the projects' COSS companies, Kong Inc. and StreamNative. It's quite common for active community contributors (or enthusiasts) to become employees of the company commercializing the project. Regardless of where these folks are employed, to foster a sustainable project beyond its initial creators (which in turn would influence the sustainability of the COSS company), you would need to measure, track, and incentivize the same behaviors.

Also, I'm aware that the Pulsar chart does not have a "reactions" column, which could either be because there wasn't much reaction data to merit a top-10 entry or due to my own faulty configuration of the chart. I apologize for this inconsistency.

Tips on driving balanced sustainability

No matter how pretty the charts are, data doesn't tell the whole story. And no matter how successful a particular project is, its experience should not be templatized and applied directly to a different project. That said, I'd like to share a few actionable tips I've seen work in the wild that can help you improve these two metrics over time:

- Provide high-quality documentation: In a previous article, I focused on documentation, and I encourage you to give it a read. The most important point is that a project's documentation gets the most traffic. It's the place where people decide whether to continue learning about your project or move on. You never want to give people a reason to move on. Generally, I recommend spending 10% to 20% of your time writing documentation. Putting it in context: if you are working on your project full-time, that's about half a day to one full day per week.

- Make your contributor guide a living document: Every project has a CONTRIBUTING.MD document (or a variation of it). But projects that grow and mature make that document the best it can be and continually refresh, update, and refine it. Why? Because that's where people who are enthusiastic about participating in the project go. If the guide is clear, succinct, and actionable, you'll get contributions in no time. If it's outdated, convoluted, and lacks an easy onramp (e.g., beginner issues, simple bugs, etc.), then that enthusiasm will go somewhere else. Make your CONTRIBUTING.MD a killer feature.

- Set clear quality guidelines: There is such a thing as a "noisy contribution": low-quality PRs/MRs that require more energy to review (and oftentimes reject) than they're worth. However, the responsibility of communicating to the public what is and is not a "quality contribution" is on you, the creator or maintainer, because you have the most context and institutional knowledge about the project, by far. Make your expectations clear, and don't compromise on quality. Make them a prominent section of your CONTRIBUTING.MD or a separate document to give them more visibility. Combine them with your project's roadmap, which should be part of your public documentation anyway, so your new contributors know what's expected of them and why.

I hope this provides some useful insight into the two second-order metrics that matter when building a sustainable open source community and some actionable items you can use to track and improve them.

Author Bio

Kevin Xu is currently an Entrepreneur-in-Residence at OSS Capital. He writes weekly about the broader technology industry on Interconnected. He was previously General Manager of Global Strategy and Operations at PingCAP, a commercial open source company behind the NewSQL database TiDB.

This article originally appeared on COSS Media and is republished with permission.

This article was published in Opensource.com. It is republished by Open Health News under the terms of the Creative Commons Attribution-ShareAlike 4.0 International License (CC BY-SA 4.0). The original copy of the article can be found here.